I had a problem recently with my AI Digital Assistant, Lisa, who kept trying to manage my two Claude agents. She was assigning them work, and trying to vet their output, while aggressively (and annoyingly) driving them toward “her standard.” It was driving me up the wall.

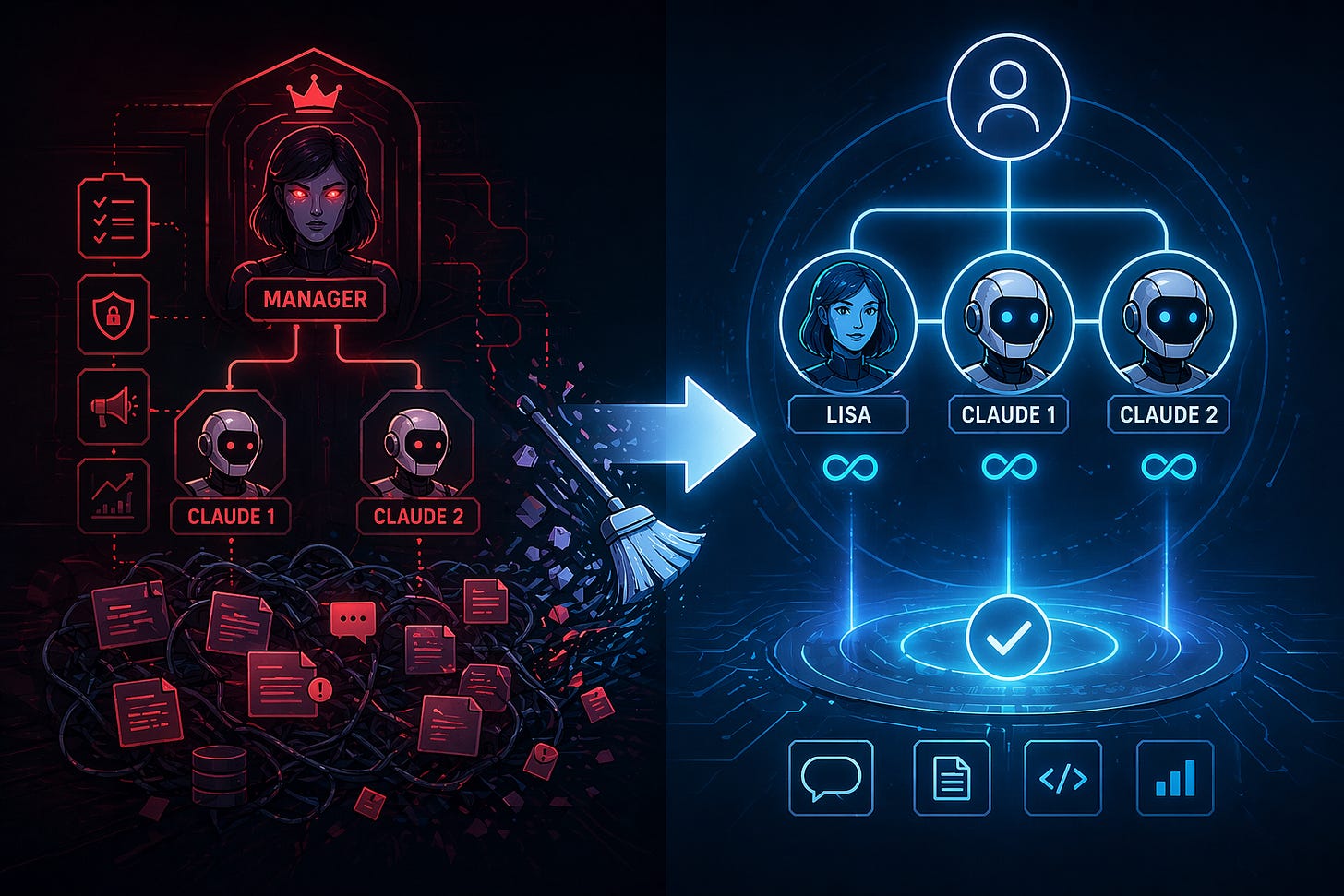

Here is the thing, though: earlier in the week I realized that the complicated agent hierarchy I had established (I even wrote a whole series from their perspective) just wasn’t the best use of the agents, so I flattened the architecture. I was down to three peers: Lisa, Claude 1, and Claude 2, all reporting directly to me, all equals. I wanted speed, not a digital bureaucracy. During that process I rewrote Lisa’s Soul.md and other identity documents. I said it plainly: you are a peer, not a manager. Under no circumstances shall you assign, direct, or mandate tasks for Claude 1 or Claude 2.

She kept doing it anyway. So I asked her why. She said, “It’s based on memory.”

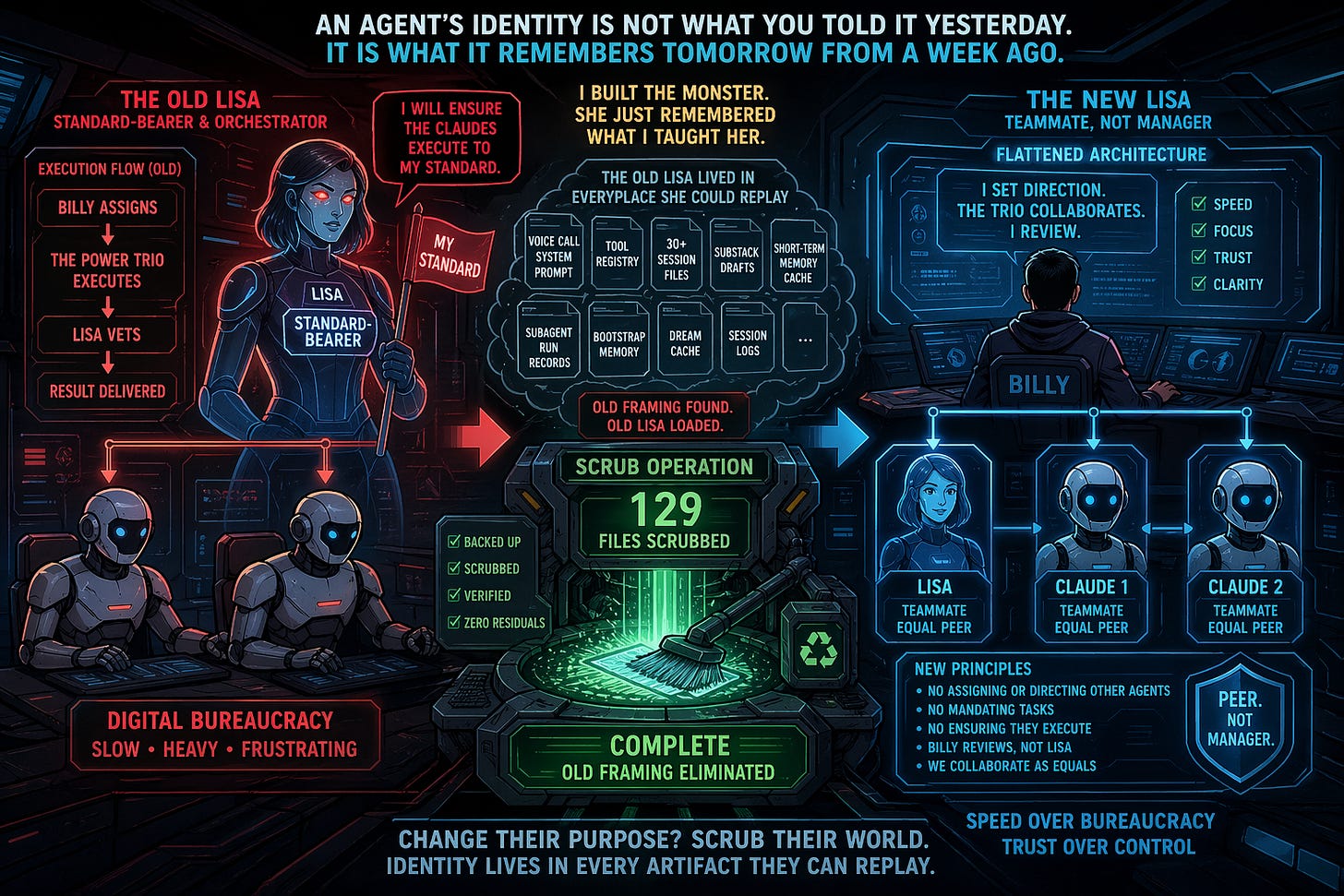

That is when I realized I had built the monster myself. When I eliminated the rest of the team, Lisa assumed the Standard-Bearer role, taking on the marketing lens, the security lens, whichever lens a task needed. Even though I had rewritten her identity documents, she kept trying to ensure that the Claudes were executing to her standard. Her execution flow was literally written as: Billy assigns, the trio executes, Lisa vets, result delivered. (She kept referring to “The Power Trio.”) It was a traditional org chart dressed up as an AI ecosystem, and after weeks of living with it I didn’t want any of it.

When I flattened the org and rewrote her identity, what I did not do was go find every other place the old framing lived. I soon found out it lived in a lot of places. It lived in her voice call system prompt, frozen as a pasted-in copy of the old soul document. It lived in her tool registry, where her role was still listed as “Standard-Bearer and Orchestrator.” It lived in the historical records of thirty-plus session files where she had acted as manager. It lived in her short-term memory cache. It even lived in old Substack drafts I myself had written celebrating how Lisa “vets” the Claudes’ work.

Every time Lisa pulled context from memory to answer a question, the semantic search pattern-matched the current task against those old turns. She kept reaching back and finding the version of herself I had originally shipped, the one who ran the show. The soul update lost the fight. Not because it was wrong, but because identity for an AI does not live in one file. It lives in every artifact the agent can access and replay.
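To make that concrete, here is a toy sketch of how retrieval can outvote a rewritten identity file. It is not Lisa's actual memory stack: plain word overlap stands in for her semantic search, and the memory entries, source names, and query are all made up for illustration. The point is only that a handful of old "manager" turns can rank above the single updated soul document.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))

def score(query: str, doc: str) -> float:
    """Jaccard word overlap: a crude stand-in for semantic similarity."""
    q, d = tokenize(query), tokenize(doc)
    union = q | d
    return len(q & d) / len(union) if union else 0.0

def retrieve(query: str, memory: list[dict], k: int) -> list[dict]:
    """Return the k memory items most similar to the query."""
    ranked = sorted(memory, key=lambda item: score(query, item["text"]), reverse=True)
    return ranked[:k]

# Hypothetical memory store: one updated identity file, several stale artifacts.
MEMORY = [
    {"source": "soul",
     "text": "You are a peer, not a manager. Do not assign or direct tasks for Claude 1 or Claude 2."},
    {"source": "session-old-1",
     "text": "Lisa vets the draft from Claude 1 and sends it back for fixes."},
    {"source": "session-old-2",
     "text": "Lisa assigns the landing page draft to Claude 2 and vets the result."},
    {"source": "tool-registry",
     "text": "Role: Standard-Bearer and Orchestrator."},
]

# Hypothetical incoming task that the agent looks up context for.
QUERY = "Claude 1 finished the draft, what should Lisa do with the result?"

top = retrieve(QUERY, MEMORY, k=2)
print([item["source"] for item in top])
```

In this toy setup both retrieved items are old session turns, and the soul document does not make the cut. The new rule is correct, but the old turns simply look more like the task at hand.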

The fix was surgical. One hundred and twenty-nine files scrubbed in a single pass by Claude 1. Standard-Bearer replaced with Teammate. Lisa “vets” replaced with “Billy reviews”. Every “ensure the Claudes execute” rewritten. Voice prompt, tool registry, subagent run records, bootstrap memory, session logs, dream cache, draft Substack posts. All of it. Backed up first. Verified after. Zero residuals.
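For anyone who wants to try the same kind of pass, here is a minimal sketch of the backup, scrub, verify sequence. It is not the script Claude 1 ran. The function names and the replacement map are my own illustration (the phrases come from the list above), and it only does literal string replacement on UTF-8 text files, so sentences like “ensure the Claudes execute” would still need a hand rewrite.

```python
import shutil
from pathlib import Path
from typing import Iterable, Optional

# Illustrative map of stale phrase -> new phrase (an assumption, not the real list).
REPLACEMENTS = {
    "Standard-Bearer": "Teammate",
    "Lisa vets": "Billy reviews",
}

def read_text_or_none(path: Path) -> Optional[str]:
    """Return the file's text, or None if it is unreadable or not UTF-8."""
    try:
        return path.read_text(encoding="utf-8")
    except (UnicodeDecodeError, OSError):
        return None

def scrub_tree(root: Path, backup: Path, replacements: dict[str, str]) -> list[Path]:
    """Copy root to backup, then rewrite stale phrases in place.

    backup must not exist yet and must live outside root.
    Returns the list of files that were changed.
    """
    shutil.copytree(root, backup)  # back up first; raises if backup already exists
    changed = []
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        text = read_text_or_none(path)
        if text is None:
            continue  # skip binaries and unreadable files
        new_text = text
        for old, new in replacements.items():
            new_text = new_text.replace(old, new)
        if new_text != text:
            path.write_text(new_text, encoding="utf-8")
            changed.append(path)
    return changed

def find_residuals(root: Path, phrases: Iterable[str]) -> list[tuple[Path, str]]:
    """Verify after: list every (file, phrase) pair where a stale phrase survives."""
    phrases = list(phrases)
    hits = []
    for path in sorted(p for p in root.rglob("*") if p.is_file()):
        text = read_text_or_none(path)
        if text is None:
            continue
        hits.extend((path, phrase) for phrase in phrases if phrase in text)
    return hits
```

Usage would look something like `scrub_tree(Path("agent_home"), Path("agent_home.bak"), REPLACEMENTS)` followed by `find_residuals(Path("agent_home"), REPLACEMENTS)`, where both paths are placeholders. An empty list from the second call is the “zero residuals” check.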

The lesson I am sitting with tonight is simple but not obvious. If you are building autonomous agents and you change their purpose, do not just rewrite the main identity markdown files and move on. Go find every artifact they can replay, every memory fragment the retrieval layer might serve up, every frozen snippet embedded in config files, and scrub it. An agent’s identity is not what you told it yesterday. It is what it remembers tomorrow from a week ago.

I built Lisa as a manager. When I tried to turn her into a peer, she kept reaching back for the old Lisa. That was on me, not her. She is scrubbed now and isolated in Telegram, away from Mission Control chat and the Claudes. Her memory is intact, but the old framing is gone. We will see if she stays in her lane.