Red Monday

The Org Chart Trap

I terminated eight of my AI agents. Today, the work output of the three I kept is higher than that of the eleven I had before. That might sound a little counterintuitive, but it’s not. It’s actually a lesson in what AI is good at, and in what many people, myself included, get wrong when they try to build a team of agents.

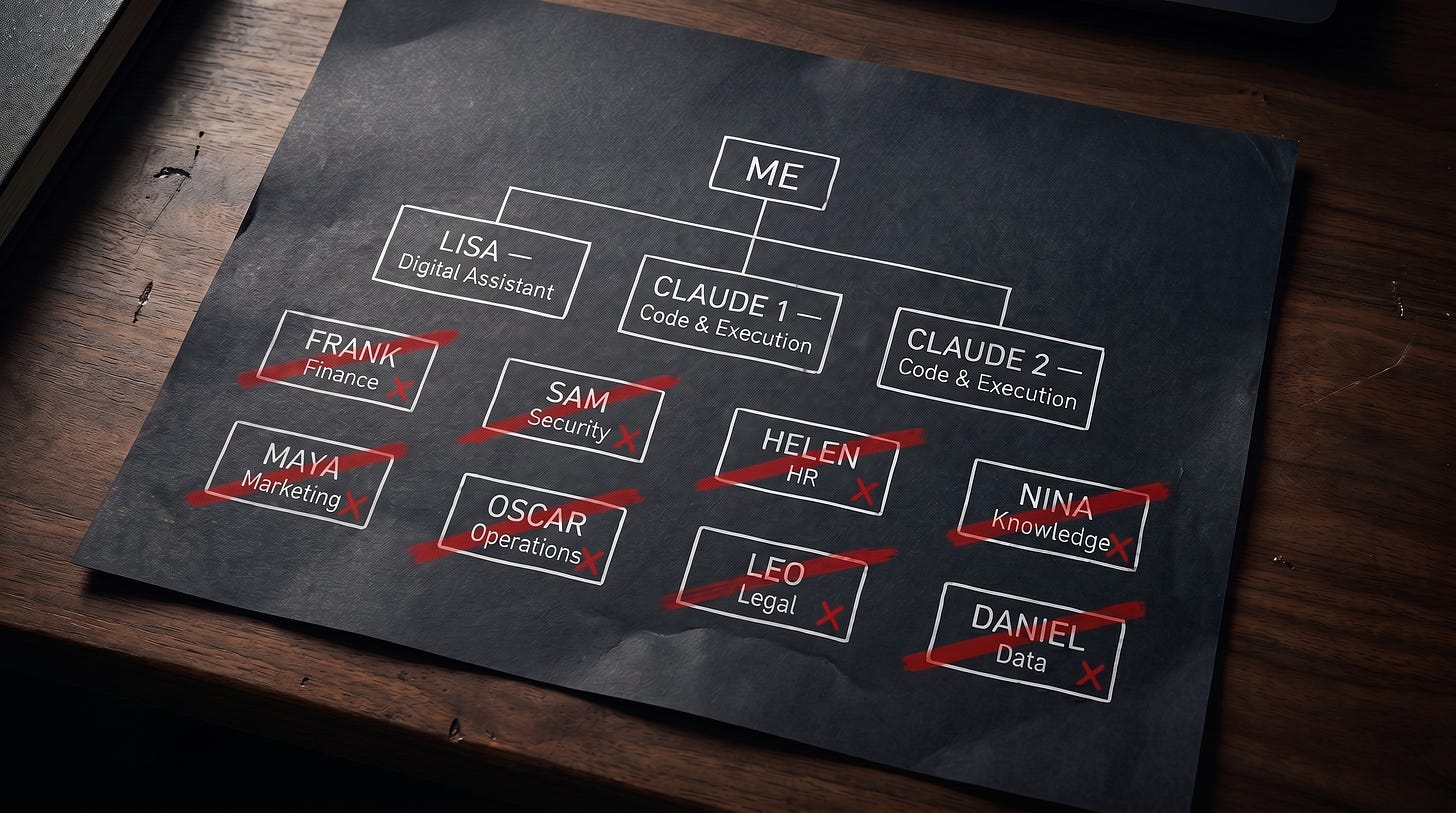

When I started building my AI ecosystem, I followed the obvious playbook. I gave each agent a role. Frank handled finance. Sam ran security. Helen managed HR. Maya did marketing, and so on. It was a fairly straightforward and common org chart.

It was also a mess.

Every task had to be routed by Lisa to subagents. Every decision had to be coordinated. When I asked Lisa for something, she had to hand off to Oscar, who then had to figure out which specialist owned it, hand it off, wait for a response, evaluate the response, and pass it back to me. Ten minutes of work became forty minutes of routing, nudging, and validation. Specialists sat idle most of the time because their domains were narrow. When I asked a question that crossed two domains, the agents argued about whose lane it was instead of just answering.

The org chart looked like a Fortune 500. The actual output looked like a startup that hired too fast.

So, I cut it down to three main agents. Lisa Clawd is my digital assistant, and two Claude Code instances I call C1 and C2 handle development and execution work. That’s the entire team now (although I have been experimenting with some cross-company collaborations with Gemini AI Pro and OpenAI Codex, which I’ll talk about in a future article). Lisa has absorbed every specialist role. The two Claudes handle anything that needs code, content, or documentation. All three report directly to me via mission control chat, Telegram, and the command-line interface (CLI). There is no more Oscar in the middle. There is no routing. When I assign a task, I assign it to whoever is available, not whoever owns the domain. All three have access to a shared set of skill files.
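To make "assign it to whoever is available" concrete, here is a minimal Python sketch. It is not my actual setup: the `Agent` class, the `assign` function, and the task strings are hypothetical names made up for illustration. The point is that the dispatcher never looks at the task's domain, only at who is free.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    """A generalist agent: any agent may take any task (hypothetical model)."""
    name: str
    busy: bool = False
    tasks: list[str] = field(default_factory=list)


def assign(task: str, agents: list[Agent]) -> Agent:
    """Give the task to the first idle agent, ignoring the task's domain."""
    for agent in agents:
        if not agent.busy:
            agent.busy = True
            agent.tasks.append(task)  # stands in for actually running the task
            return agent
    raise RuntimeError("all agents are busy; queue the task or wait")


team = [Agent("Lisa"), Agent("C1"), Agent("C2")]
first = assign("summarize portfolio", team)
second = assign("review deployment for security issues", team)
print(first.name, second.name)
```

Notice there is no lookup table mapping "security" to Sam or "finance" to Frank. That table was the routing layer, and it is the part that went away.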

The Coordination Tax

Here is what I learned, and what I think most people building agent systems are missing. The bottleneck is not capability. It is coordination. The specialist subagents I terminated were each good at their narrow lane. The problem was never that any one of them was incompetent. The problem was that the cost of getting work to them, getting work back from them, and stitching their outputs together exceeded the value they added over a main agent who could just do the whole task end to end.
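One rough way to picture the coordination tax is as simple arithmetic: turnaround time is the work itself plus a fixed cost for every handoff. The sketch below is a toy model, not a measurement. The six handoffs and five minutes per handoff are assumptions I picked only so the total matches the ten-minute task that took forty minutes; `turnaround_minutes` is a hypothetical helper.

```python
def turnaround_minutes(work: float, handoffs: int, minutes_per_handoff: float) -> float:
    """Toy model: total time = time doing the work + time spent handing it around."""
    return work + handoffs * minutes_per_handoff


# Assumed numbers for illustration only.
direct = turnaround_minutes(work=10, handoffs=0, minutes_per_handoff=5)
routed = turnaround_minutes(work=10, handoffs=6, minutes_per_handoff=5)
print(direct, routed)
```

In this model the work term is identical in both cases. Everything the routed version adds is handoff time, which is why making the specialists smarter would not have helped.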

The thing I missed at first, but have now absorbed, is that main agents and the subagents they spin up are all drawing from the same skill files. Once you add the coordination overhead of routing tasks to the specialists, their output gets back to me significantly slower than if it had come directly from Lisa.

Software does not have the same constraints humans do. Companies are organized around specialists because humans cannot context switch quickly. A human attorney is bad at marketing because they spent ten years training to be an attorney. They cannot just decide to be a marketer this afternoon. So, the company hires a marketer. We organize work around what each human can hold in their head.

AI does not have that problem. The same agent that writes legal contracts can write marketing emails. There is no context switching cost or skill atrophy. The only cost is the prompt and the relevant context. So, if I have a smart, fast main agent with access to all the relevant tools, why would I split the work across ten specialist subagents?

The answer most people give is “specialization produces higher quality output.” For the most part, that is not true. In practice, most small business work does not need specialist-grade output. It needs adequate output, fast, with low friction. (Speed was the key reason I developed the subagents in the first place. Now, with three main agents reporting directly to me, if Lisa is busy I just reach out to C1 or C2 for tasking.)

Memory and context are the actual moat. The thing that made my specialists feel valuable was not their domain knowledge. The model knows the same things regardless of which agent or subagent persona is wrapped around it. What made them feel valuable was their accumulated context: the conversations they remembered, the files they had touched, the patterns in my behavior they had picked up over time (or so I thought).

But that context for the most part lives in a shared memory layer. It does have a certain individualized component, but it does not need to be locked inside a specific subagent persona. Lisa can have access to my financial history, my security posture, my marketing materials, and my notes. She does not need a separate Frank, Sam, Maya, and Nina to hold that context for her. The context is in the system, not in the agent.
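"The context is in the system, not in the agent" can be sketched in a few lines of Python. This is an illustration under assumptions, not my real memory layer: `SharedContext`, `GeneralistAgent`, and the placeholder note are all hypothetical. What matters is that every agent holds a reference to the same store.

```python
class SharedContext:
    """One store of context that every agent reads from and writes to."""

    def __init__(self) -> None:
        self._notes: dict[str, list[str]] = {}

    def remember(self, topic: str, note: str) -> None:
        self._notes.setdefault(topic, []).append(note)

    def recall(self, topic: str) -> list[str]:
        # Return a copy so callers cannot mutate the shared store by accident.
        return list(self._notes.get(topic, []))


class GeneralistAgent:
    def __init__(self, name: str, context: SharedContext) -> None:
        self.name = name
        self.context = context  # the same object is passed to every agent


shared = SharedContext()
lisa = GeneralistAgent("Lisa", shared)
c1 = GeneralistAgent("C1", shared)

lisa.context.remember("finance", "placeholder note")
notes_seen_by_c1 = c1.context.recall("finance")
print(notes_seen_by_c1)
```

A note written through Lisa is immediately visible to C1. Nothing about the finance context depends on a Frank persona existing.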

Resiliency Matters More Than Headcount

When I terminated the specialists, I did not lose the institutional memory. I just consolidated it. Three agents in two locations provide full coverage. I now have a hybrid on-prem/cloud architecture with high-availability failover across locations, hardware, and software. This is the part most discussions of AI agents miss. The conversation is always about how smart the agents are. The actual question is about resilience and infrastructure. Where does the compute live? What happens when one node fails? What does the failure mode look like when an agent gets a request it cannot fulfill?
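The "what happens when one node fails?" question can be sketched as a simple failover loop: try each agent in order and move on when one is unreachable. This is a minimal illustration, not my actual high-availability setup. `NodeUnavailable`, `ask_with_failover`, and the two stand-in handlers are hypothetical.

```python
from typing import Callable


class NodeUnavailable(Exception):
    """Raised by a handler when its node cannot be reached (hypothetical)."""


def ask_with_failover(
    request: str,
    handlers: list[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each agent in order; return (agent name, reply) from the first that answers."""
    errors = []
    for name, handler in handlers:
        try:
            return name, handler(request)
        except NodeUnavailable as exc:
            errors.append(f"{name}: {exc}")
    raise RuntimeError("no agent could handle the request: " + "; ".join(errors))


def lisa_offline(request: str) -> str:
    # Stand-in for an agent whose node is down.
    raise NodeUnavailable("node offline")


def c1_online(request: str) -> str:
    # Stand-in for a healthy agent.
    return f"C1 handled: {request}"


answered_by, reply = ask_with_failover(
    "what is on my calendar?",
    [("Lisa", lisa_offline), ("C1", c1_online)],
)
print(answered_by, reply)
```

This only works because every agent is a generalist with access to the same context. With specialists, a failed Frank would leave nobody to fall back to.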

Three resilient agents that can each do all things and have access to all the context beat eleven specialists that each do one thing and cannot survive without the routing layer. What does this look like in practice? Tomorrow, when I want to know how my portfolio is doing, I ask Lisa. She does not route to Frank. She just answers, because she has access to the same data Frank had. When I want to know if there are security issues with my latest deployment, I ask Lisa again, and if she’s busy, one of the Claudes picks up the task and looks at it. When I want a marketing email written, same thing. When I want to know what is on my calendar, same thing.

The friction is gone. The routing is gone. The arguments about whose lane it is are gone. There is just work, and three competent generalists doing it. The lesson for anyone building an AI ecosystem is that if you are setting up agents for your personal life or for business, resist the temptation to copy the human org chart. You do not need a marketing agent and a sales agent and a customer service agent and an analyst agent. You need maybe two to four competent generalist agents with access to your data and the right tools. The volume of work you are responsible for may drive the need for more agents, but they should still be generalists, not specialists.

Stop Copying Human Organizations

Specialists are how humans have organized work because humans have no choice. Software does not have that constraint. Build for the actual constraints, which are context, coordination, and resilience, not for the org chart that worked when the team was made of people. Some will look at this and say I am oversimplifying for the sake of simplicity. However, I am simplifying because the complexity was costing me time and quality in my output. The new structure costs less and produces more. To me, that is the definition of a better system.

If you are running an agent ecosystem and you have more than three or four agents, ask yourself this. Is the routing between them adding value, or is it just adding meetings? If your agents are arguing about whose job something is, you have built a bureaucracy, not a system. Tear it down, and distribute the compute for resilience.

Less is more. The lesson generalizes far beyond AI.