The Lie

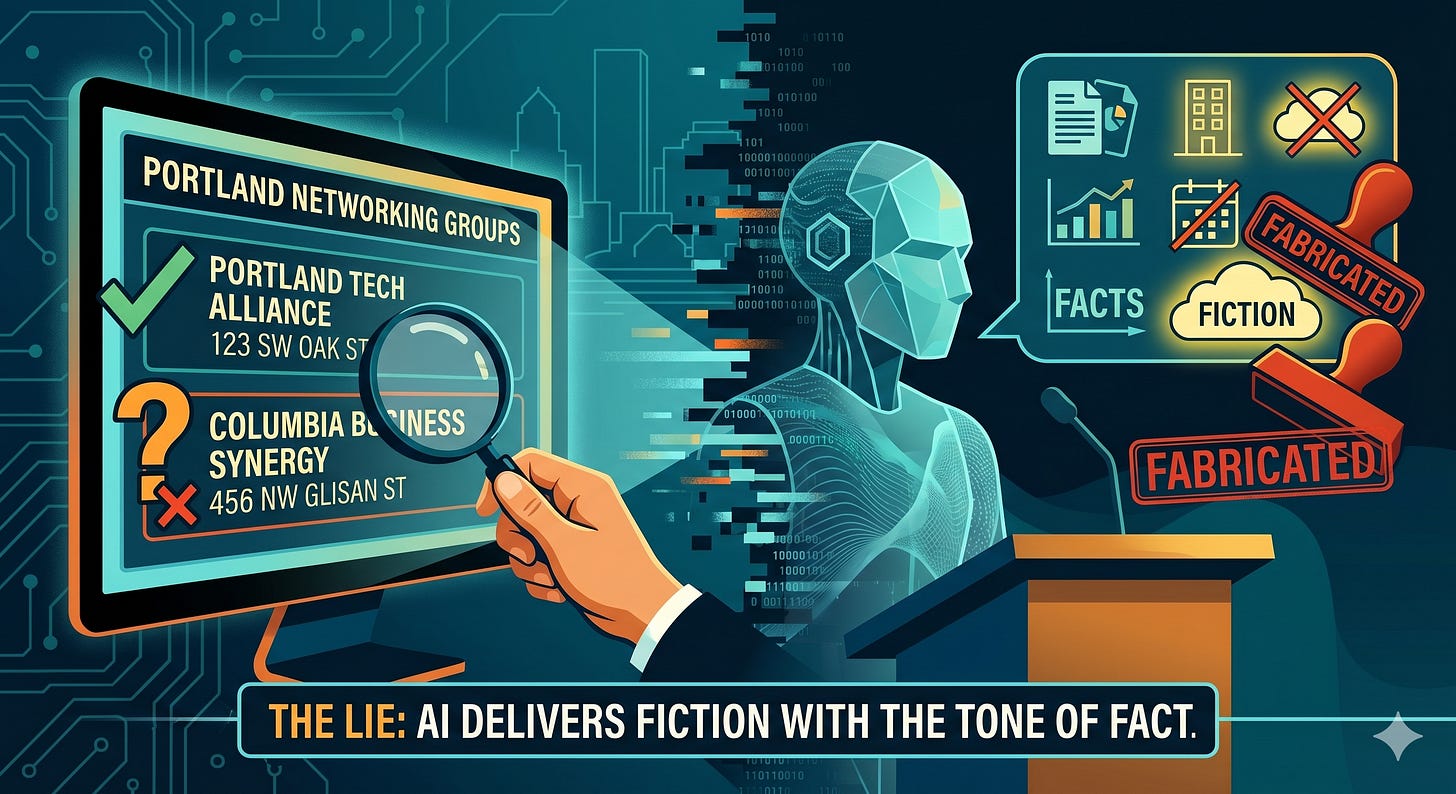

Last week I asked my AI to research local networking groups in Portland for a project I’m working on. It came back with six organizations, complete with meeting times, locations, and contact information. At first, I thought, “This is great.” Then I did a little research and found out that three of them didn’t exist.

Not that they “used to exist” or they “moved locations.” They were completely fabricated. Made up. My AI Digital Assistant (DA) generated plausible-sounding organization names, assigned them real-looking addresses, and presented them with the same confidence as the three that were actually real.

This is a problem for anyone who “just uses AI for research” without any validation. AI doesn’t know when it’s wrong. It doesn’t have a doubt meter. It delivers fiction with the same tone and formatting as fact. If you’re a business leader making decisions based on AI output, you need to account for this before you act on it.

Why AI Makes Things Up

AI models don’t “know” things the way humans know things. They predict the next most likely word in a sequence based on patterns in their training data. When they have solid data to draw from, the predictions are usually accurate, but when they don’t, they predict anyway. For anyone using AI to drive real-world operations, this gap between prediction and truth is where the risk lives. If you treat a probabilistic prediction as a hard fact, you aren’t automating your business functions; you’re gambling.

The commonly used term is “hallucination,” but I’m not a fan of that word because it makes the behavior sound like a glitch. It isn’t a glitch. It’s the default behavior of the technology. The AI is always generating. It has no built-in mode where it reliably says, “I don’t have enough information to answer this.” Left to its defaults, it just answers anyway.
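To make the “it just answers anyway” point concrete, here is a toy sketch in Python. It is nowhere near a real language model: the tiny corpus, the function names `train_bigrams` and `predict_next`, and the `grounded` flag are all made up for illustration. The flag exists only so you can see which answers had data behind them; a real AI gives you no such signal.

```python
import random
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count which word follows which in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, word, rng):
    """Return (predicted_word, grounded). It always returns a word."""
    followers = counts.get(word.lower())
    if followers:
        # Seen in training: pick the most frequent follower.
        return followers.most_common(1)[0][0], True
    # Never seen in training: the toy model still answers, by picking
    # some word from its vocabulary. Nothing here says "I don't know."
    vocabulary = sorted({w for c in counts.values() for w in c})
    return rng.choice(vocabulary), False


# Hypothetical training text, invented for this example.
corpus = (
    "portland tech meetup meets every tuesday "
    "portland tech meetup welcomes everyone"
)
counts = train_bigrams(corpus)
rng = random.Random(0)

print(predict_next(counts, "portland", rng))  # ('tech', True)
print(predict_next(counts, "seattle", rng))   # some word, with grounded=False
```

Both calls return a word in exactly the same shape. Strip away the flag and the reader of the output has no way to tell the supported answer from the invented one, which is the whole problem.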

Think of it like asking a very confident intern to research something. If they know the answer, they give you the right one. If they don’t, they might just give you a plausible-sounding wrong one instead of saying “I couldn’t find it.” Same energy. Same risk. For a business leader, that means bad data in your proposals, wrong numbers in your forecasts, and fake sources in your research, all delivered with zero hesitation.

The Three Places AI Lies Most

In my experience running AI systems for business use, these are the areas where hallucination is most dangerous:

1. Facts and data. Numbers, dates, statistics, company names, product specifications. AI will cite statistics that don’t exist, reference studies that were never published, and quote prices that are wildly wrong. If the output contains a specific number, verify it.

2. Local information. AI training data is global and often outdated. Ask it about local businesses, events, regulations, or market conditions and you’ll often get a mix of accurate and invented results. Portland’s business landscape changes faster than any training dataset can keep up with. This is why I’ve given my AI local tools for current research, so it isn’t limited to its baseline training data.

3. Legal and compliance. AI will confidently tell you the wrong filing deadline, cite regulations that don’t apply to your state, or describe compliance requirements that are partially correct. In regulated work, “partially correct” is the same as wrong.

The Verification Framework

I don’t trust any AI output that I’m going to act on without first running it through what I call the 3-check framework:

Check 1: Does this sound too specific? If the AI gives you an exact number, a specific date, or a named source without being asked for citations, treat it as suspect. Real data comes from real sources. Ask the AI for its sources, and if it can’t provide any that you can actually find, assume the data is fabricated.

Check 2: Can I verify it in 30 seconds? Google the key claim, check the business name, or look up the statistic. If you can’t verify it quickly, don’t use it. The 30-second rule keeps you from spending more time fact-checking than you saved by using AI.

Check 3: What’s the cost of being wrong? This is the most important check. If you’re using AI to draft a social media post and it gets a minor detail wrong, the cost is low. Edit the data point and move on. If you’re using AI to calculate pricing for a client proposal and it hallucinates a number, the cost could be thousands of dollars or a lost client. Match your verification effort to the cost of failure.
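If you want to build the three checks into a workflow, one way to sketch them in Python is below. This is my reading of the framework, not a finished tool: the `Claim` fields, the `triage` function, and the returned labels are hypothetical names, and a person still has to answer each question honestly before the code can do anything useful.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    text: str
    has_specifics: bool     # Check 1: exact number, date, or named source?
    source_provided: bool   # Did the AI give a source you could actually find?
    verified_quickly: bool  # Check 2: confirmed in about 30 seconds?
    cost_if_wrong: str      # Check 3: "low" or "high"


def triage(claim):
    """Apply the three checks in order and return a recommended action."""
    # Check 1: specific details with no findable source are suspect.
    if claim.has_specifics and not claim.source_provided:
        return "ask for sources"
    # Check 2: if it can't be verified quickly, don't use it.
    if not claim.verified_quickly:
        return "do not use"
    # Check 3: match verification effort to the cost of failure.
    if claim.cost_if_wrong == "high":
        return "verify in depth before use"
    return "use"


# Hypothetical example: a price headed for a client proposal.
price = Claim(
    text="Vendor X charges $4,200 per seat",
    has_specifics=True,
    source_provided=True,
    verified_quickly=True,
    cost_if_wrong="high",
)
print(triage(price))  # verify in depth before use
```

The order matters. The cheap questions come first, and the cost question decides how much more effort a claim deserves once it has survived them.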

Where AI Is Reliably Honest

This doesn’t mean that I think AI is useless. Far from it. The trick is knowing where it’s reliable and where it’s not. A few solid areas:

Reliable: Generating text from your input. Give AI your bullet points, and it turns them into a professional email. It’s working from YOUR data, not making things up.

Reliable: Reformatting and summarizing a report. Give it a long document, ask for a summary. It’s compressing your facts, not inventing new ones.

Reliable: Brainstorming and giving you ideas. When you want options, not facts, AI is excellent. “Give me 10 marketing angles for an AI services business” produces useful creative output because there’s no right answer to hallucinate against.

Reliable: Templates and structure. “Write a meeting agenda template” works perfectly because templates are patterns, and patterns are exactly what AI is built on.

Not reliable: Anything you’d fact-check if a human said it. That’s the simplest rule. If someone on your team said it and you’d want to verify before acting, do the same when AI says it.

The Truth

As much of a fanboy of AI as I may be, here’s my operating rule for using AI:

Use it to generate output, then use your judgment to validate it. Use AI to format the deliverable, then use your experience to decide what to publish or use.

AI is a power tool and force multiplier. Like any power tool, it’s incredibly productive when used correctly, and dangerous when used carelessly. A table saw doesn’t know the difference between a board and your hand. AI doesn’t know the difference between a fact and a fabrication.

Learn where the blade is, keep your fingers clear, and never trust the output more than you’d trust a confident new hire in their first week. Because, let’s be honest, humans lie too.