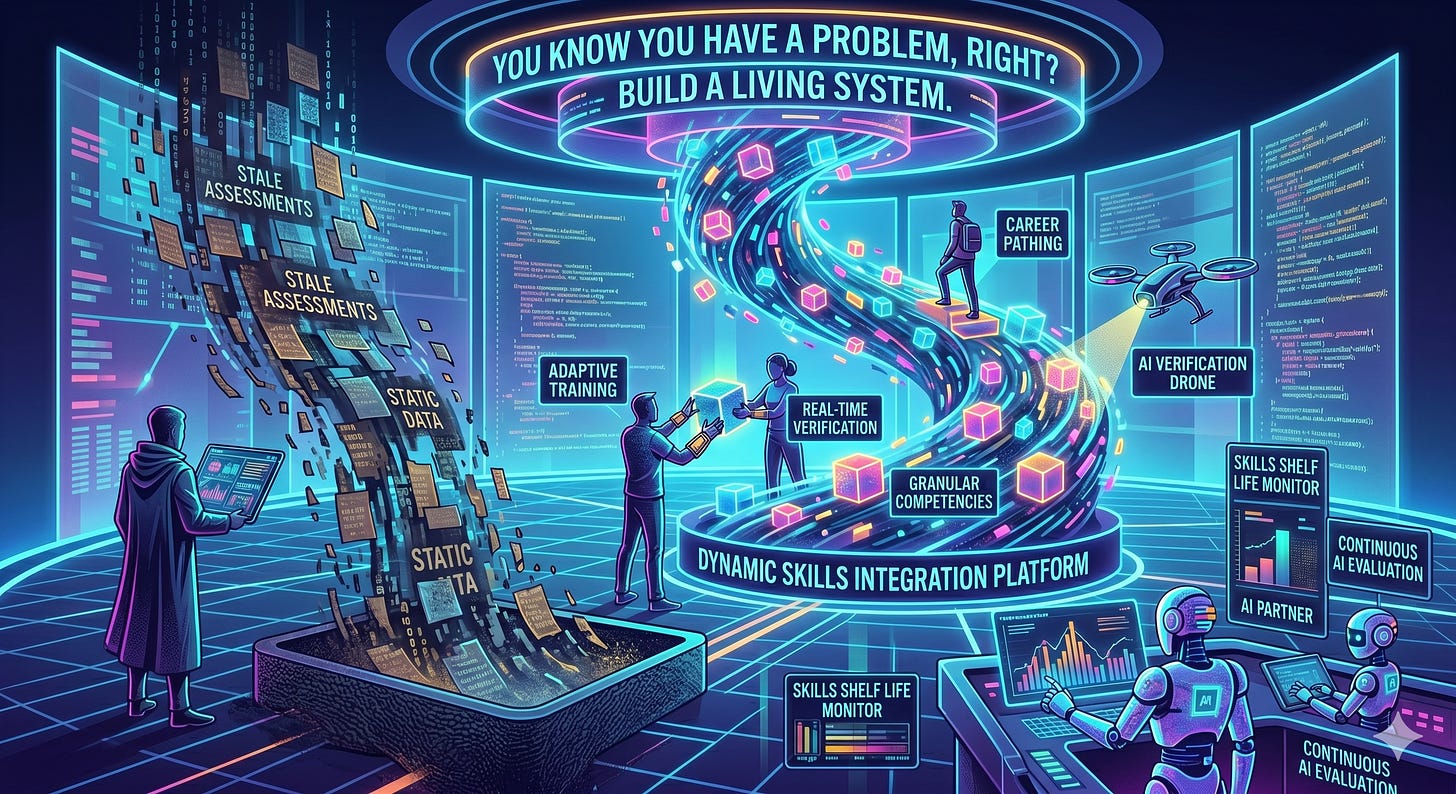

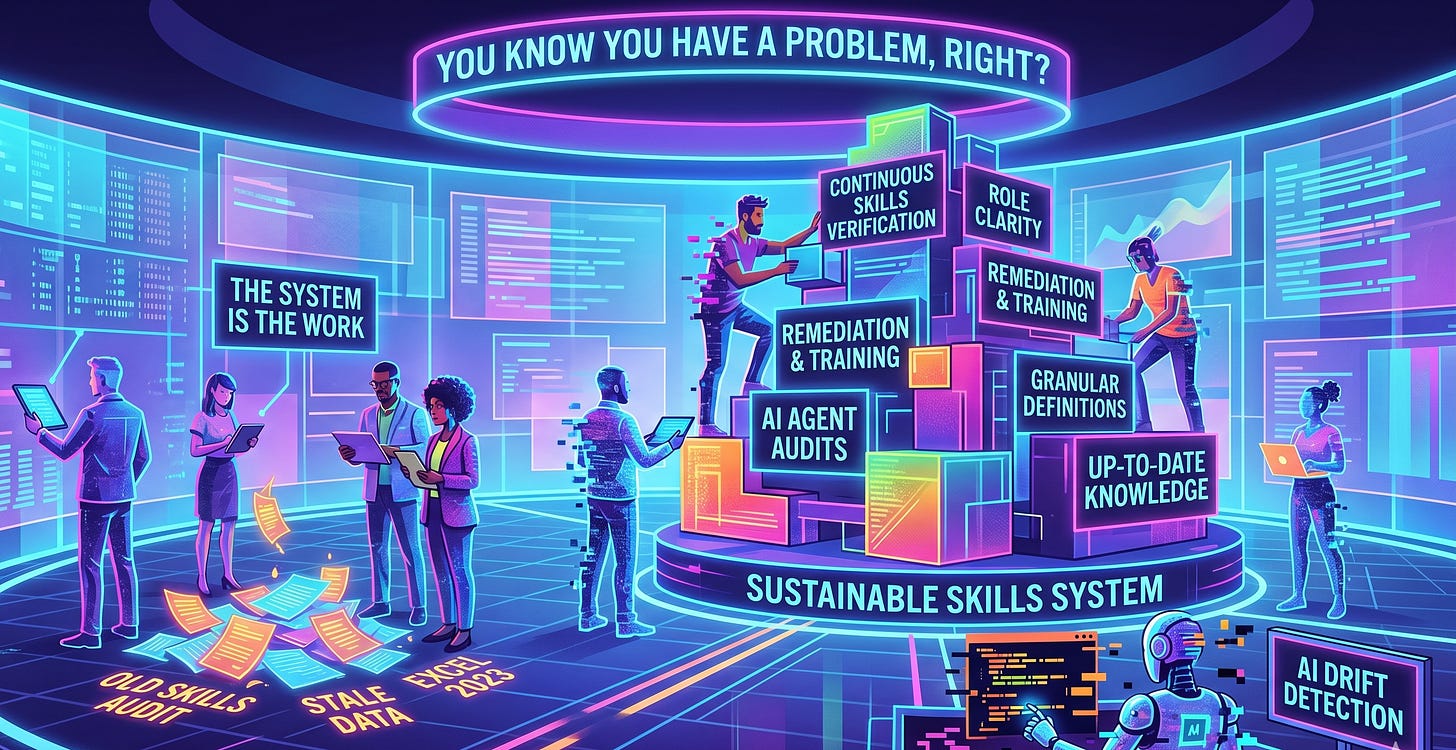

You Know You Have a Problem, Right?

Most businesses know they have a skills problem. They can feel it when projects take longer than they should, the same three people get pulled into everything, and new tools get adopted halfway and then abandoned. Institutional knowledge lives in one person’s head, and everyone quietly hopes that person does not leave.

So someone decides to run an audit: they map out what the team can actually do versus what the work requires, identify the gaps, and produce a report. That part feels productive because there is a deliverable with data, and it feels like something got done.

But then nothing happens. Or worse, something happens for two weeks and then fades.

The audit is not the hard part. The hard part is running the system that keeps the gaps from reopening. That is where most organizations fail, and it is worth talking about why.

Skills Have a Shelf Life

The most dangerous assumption you can make after a skills audit is that the results are permanent. They aren’t, because a skills assessment is a dated document. It tells you what your team could do on the day you tested them, but it says nothing about whether those capabilities still match the work six months later.

This is not a failure of the audit. It is the nature of how work evolves: tools change and processes get updated. A role that required Excel proficiency in January might require Python in June. The person who was your best project manager in Q1 might be underwater in Q2 because the scope of the projects changed, not because they got worse at their job.

The practical response is to give every assessed skill a review cadence. Some skills are stable and need only annual checks. Some are volatile and need quarterly reassessment or sooner, depending on how quickly the environment is changing. Some skills should even be flagged for immediate re-evaluation when the tools or context around them change significantly.
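To make that cadence concrete, here is a minimal Python sketch. The interval values, the `SkillAssessment` record and the `context_changed` flag are all illustrative assumptions, not a prescribed system; the point is only that each assessment carries its own expiry rule.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical review intervals in days; tune these to your own environment.
REVIEW_INTERVAL_DAYS = {"stable": 365, "volatile": 90}


@dataclass
class SkillAssessment:
    person: str
    skill: str
    volatility: str                # "stable" or "volatile"
    assessed_on: date
    context_changed: bool = False  # set when tools or context shift significantly


def next_review(a: SkillAssessment) -> date:
    """Return the date by which this assessment should be repeated."""
    if a.context_changed:
        # A significant tool or context change makes the old result due immediately.
        return a.assessed_on
    return a.assessed_on + timedelta(days=REVIEW_INTERVAL_DAYS[a.volatility])


def is_stale(a: SkillAssessment, today: date) -> bool:
    """True when the assessment can no longer be trusted as current."""
    return today >= next_review(a)


# Illustrative records only: same person, same test date, different volatility.
excel = SkillAssessment("analyst", "Excel reporting", "stable", date(2024, 1, 15))
scripting = SkillAssessment("analyst", "Python scripting", "volatile", date(2024, 1, 15))

today = date(2024, 6, 1)
for record in (excel, scripting):
    print(record.skill, "needs review:", is_stale(record, today))
```

Two results from the same test day age at different rates, which is exactly what a single audit report hides.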

This creates administrative work, and there is no way around that. But the alternative is a skills inventory that quietly becomes fiction, and an outdated skills inventory is worse than not having one at all. It gives you confidence that is not backed by reality, and decisions made on false confidence tend to be expensive.

The rule that generally holds up: a capability assessment that does not reflect current conditions is a liability dressed up as a credential or certification.

The Uncomfortable Part: What Happens When Someone Falls Short

Every skills system eventually produces a result that nobody wants to deal with. Someone who was strong in an area six months ago is no longer meeting the bar. So, the instinct in many organizations is to flinch, soften the criteria, or simply reclassify the skill while quietly stopping the testing in that area.

It shouldn’t need to be said, but this is the wrong call every time. Not because it is soft, but because it breaks the integrity of the entire system. If people figure out that underperformance gets quietly absorbed rather than addressed, the assessment process becomes office theater. Nobody takes it seriously because there are no real consequences for ignoring or falling short of the requirement.

The better approach is straightforward. First, document it clearly: what was tested, what the result was, what specifically fell short. No ambiguity, no qualifiers, no softening the language to spare feelings. Second, figure out whether this is a skill gap or a scope gap. A skill gap means the person (or AI agent) needs training, practice or guidance in a specific capability. A scope gap means the role has outgrown its original definition, and the standard itself needs updating because everyone would fall short, not just this person. Third, set a remediation plan with a specific retest date.
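Those three steps can be sketched in a few lines of Python. Everything here is an assumption for illustration: the `Shortfall` record, the 80% threshold used to decide that "everyone would fall short," and the 30-day default retest window. Treat it as one way to force the questions to be answered, not as a scoring method.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumption: if at least this share of people in the role miss the bar,
# the standard (not the individual) is treated as the problem.
SCOPE_GAP_THRESHOLD = 0.8


@dataclass
class Shortfall:
    person: str
    tested: str       # what was tested
    result: str       # what the result was
    fell_short: str   # what specifically fell short
    gap_type: str     # "skill gap" or "scope gap"
    retest_on: date   # a specific date, not "sometime next quarter"


def classify_gap(role_results: dict[str, bool]) -> str:
    """role_results maps each person in the role to True if they met the bar."""
    if not role_results:
        raise ValueError("need at least one result for the role")
    failures = sum(1 for passed in role_results.values() if not passed)
    share = failures / len(role_results)
    return "scope gap" if share >= SCOPE_GAP_THRESHOLD else "skill gap"


def document_shortfall(person: str, tested: str, result: str, fell_short: str,
                       role_results: dict[str, bool], today: date,
                       retest_in_days: int = 30) -> Shortfall:
    """Steps one to three: record it, classify it, and set a retest date."""
    return Shortfall(
        person=person,
        tested=tested,
        result=result,
        fell_short=fell_short,
        gap_type=classify_gap(role_results),
        retest_on=today + timedelta(days=retest_in_days),
    )
```

The record has no optional fields on purpose: a shortfall cannot be logged without saying what fell short and when it will be retested.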

This is not punishment. It is simply good management. Just as you would not ignore a server that started throwing errors just because it worked fine last quarter, you cannot ignore a capability gap just because the person used to be competent in that area.

Clean documentation and honest assessment are not adversarial. They are the foundation of a team that actually improves over time instead of one that just assumes it is improving.

The Scope Creep Problem

One of the things that surfaces after an audit is that many skills are poorly defined in the first place. You tested someone on “project management” but what does that actually mean? Does it mean they can run a standup? Build a timeline? Manage a budget? Handle escalations with clients? Coordinate across three departments with competing priorities?

When the skill definition is vague, the assessment is vague, and the results are vague. You end up with a spreadsheet full of green checkmarks that do not actually tell you whether someone can do the specific work you need them to do tomorrow.

The fix is to get granular before you assess. Break “project management,” for example, into the five or six specific things your organization actually needs a project manager to do. Test those individually. A person might be excellent at timelines and terrible at client escalation. That distinction matters, and a single “pass” on “project management” would hide it.
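Here is a small Python sketch of what that granularity buys you. The sub-skill names are lifted from the questions above and the pass/fail results are made up for illustration; your own list will differ.

```python
# Hypothetical sub-skills behind the label "project management".
PROJECT_MANAGEMENT = [
    "run a standup",
    "build a timeline",
    "manage a budget",
    "handle client escalations",
    "coordinate across departments",
]


def gaps(results: dict[str, bool], required: list[str]) -> list[str]:
    """Return required sub-skills that were failed or never assessed."""
    return [skill for skill in required if not results.get(skill, False)]


def can_staff(results: dict[str, bool], needed: list[str]) -> bool:
    """True only if every sub-skill the project needs has a recorded pass."""
    return not gaps(results, needed)


# Illustrative results for one person: strong on timelines, weak on escalations.
results = {
    "run a standup": True,
    "build a timeline": True,
    "manage a budget": True,
    "handle client escalations": False,
    "coordinate across departments": True,
}

# A single rolled-up score would show four of five passed and probably go green.
passed = sum(results[skill] for skill in PROJECT_MANAGEMENT)
print(f"{passed} of {len(PROJECT_MANAGEMENT)} sub-skills passed")

# The project-specific question gives a different answer.
needed = ["build a timeline", "handle client escalations"]
print("can staff this project:", can_staff(results, needed))
print("missing:", gaps(results, needed))
```

Note that a sub-skill with no recorded result counts as a gap here. That is a design choice: an untested capability is treated the same as a failed one until someone actually checks it.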

This level of specificity feels like overkill when you are setting up the system. It does not feel like overkill the first time you assign someone to a project based on a green checkmark and discover they cannot do the specific part of the job you needed them for.

Build the Standard Before You Deploy the Skill

If there is one thing worth doing differently from the start, it is this: define what good looks like before someone even starts doing the work. Most teams add their capabilities first and then figure out how to evaluate them later. A new tool gets adopted, a new process gets rolled out, or a new responsibility gets added to someone’s plate, and the evaluation criteria trail behind by weeks or months. During that gap, the skill exists in practice but not on paper, so nobody knows what the minimum bar is because nobody wrote one down.

This creates two problems. First, the person doing the work has no clear target to hit, so they calibrate to their own judgment of “good enough,” which may or may not match what the organization actually needs. And second, the person evaluating the work has no objective standard to measure against, so the evaluation becomes subjective and inconsistent.

Treat the skill definition like a specification and write it before you deploy it, not after. If you cannot describe what competency looks like before someone starts doing the work, you probably do not have enough clarity on the work itself. That is worth discovering before you assign it, not after.

Why Most Skills Systems Die

The number one reason skills management systems fail is not that the initial audit was bad. It is that nobody plans for or thinks about the ongoing maintenance.

Running an audit is a project in and of itself. It has a start date and an end date, and you can put it on a roadmap and check off the milestones. Maintaining the system after that is not a project, but rather an ongoing operational responsibility with no finish line. Most organizations are decent or better at setting things up, but few are set up to sustain open-ended operational work that does not directly produce revenue.

Skills audits may get run once a year in the average organization, if they are on top of it. But even then, the results often sit in a shared drive while the skills change faster than the documentation gets updated. Within six months, the organization is back to the same problem it had before the audit, except now it also has a stale spreadsheet that makes people feel like the problem was already addressed.

The organizations that actually make skills management work treat it like they treat security or compliance. Not as a project that gets done, but as a function that gets staffed and baked into processes. Someone actually owns the task, the cadence and the outcome, with the tools and accountability to match.

That is more overhead than most small and mid-size businesses want to hear about. But the alternative is the cycle that most of them are already stuck in: audit, feel good, drift, realize things are broken again, audit again.

The audit was the easy part because it had a clear endpoint. It’s everything after it that is the actual work. The organizations that accept that and build for it are the ones that actually close their skills gaps. Everyone else just documents them periodically and hopes for the best.

Do You Have a System or a Series of Liabilities?

Last week I introduced you to Helen, my AI Head of HR, who talked about pretty much all of the above over two days as it applies to her AI environment. There are a lot of similarities between setting up and managing an organization IRL and doing the same in an AI environment. The risk is different with AI, though. In a regular org, if someone’s skills drift, you have time to notice. With an agent, that drift can happen instantly. A model update or a prompt change can break a core capability in a millisecond. The output still looks professional. The formatting is still perfect. But the logic is gone.

That’s why a skills audit for AI isn’t just an HR exercise. It’s an ongoing technical requirement, because if you aren’t continuously verifying that your agents can actually do the work you assigned them, you don’t have a system. You have a series of liabilities just waiting to fail you at the worst possible moment.
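For the technically inclined, continuous verification can start as small as the Python sketch below. It assumes an agent is anything you can call with a prompt and get text back; `good_agent` and `drifted_agent` are stand-in stubs, not real model calls, and the two checks are placeholders for whatever your agents are actually responsible for.

```python
from typing import Callable

Agent = Callable[[str], str]
Check = tuple[str, Callable[[str], bool]]

# Hypothetical capability checks: each pairs a prompt with a function that
# verifies the substance of the answer, not its formatting.
CHECKS: list[Check] = [
    ("What is 15% of 200? Reply with the number only.",
     lambda out: out.strip() == "30"),
    ("How many days are in a leap year? Reply with the number only.",
     lambda out: out.strip() == "366"),
]


def verify(agent: Agent, checks: list[Check]) -> list[str]:
    """Run every check and return the prompts the agent got wrong."""
    failed = []
    for prompt, is_correct in checks:
        try:
            ok = is_correct(agent(prompt))
        except Exception:
            ok = False  # an agent that errors out has also failed the check
        if not ok:
            failed.append(prompt)
    return failed


def good_agent(prompt: str) -> str:
    # Stub standing in for a real agent call.
    return "30" if "15%" in prompt else "366"


def drifted_agent(prompt: str) -> str:
    # Stub: output is still clean and confident, but the arithmetic is wrong.
    return "35" if "15%" in prompt else "366"


for name, agent in (("good_agent", good_agent), ("drifted_agent", drifted_agent)):
    failures = verify(agent, CHECKS)
    print(name, "passed all checks" if not failures else f"failed: {failures}")
```

Run something like this after every model update or prompt change, and on a schedule in between. The drifted stub would pass any review that only looked at formatting, which is why each check tests the answer itself.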